randomness is clumpy

In the previous section, I described systematic sampling as “sampling with a system.” Why do you need a system at all?

Well, this brings us back to bias which, although it isn’t always a bad thing, frequently is. Human beings are terrible at being fair, even when they’re trying to be.

In particular, they are terrible at understanding what randomness is.

An exercise I like to use with students is to ask them to write down a sequence of 100 random digits generated from their own heads. Two things typically happen. First, they find it surprisingly hard. Initially they write their digits quite quickly, but they soon slow down. This is because they’re thinking: they’re trying to make the digits look random. (Sometimes they just give up and start writing down sequences they already know, like phone numbers.)

But the second thing that happens is that they fail to construct a mathematically convincing list of random numbers. About one time in ten you would expect the same digit to be repeated — for example, in the sequence {1, 6, 4, 8, 8, 2, 0, 9, 1} there are two 8’s next to each other, a double. In a list of 100 random digits, you’d expect about ten such doubles. Typically students will generate far fewer than this. You’d also expect to see one example (on average) of a triple in a set of 100 random digits. In a class of students, this very rarely happens. Indeed, in a class of 30 students, you’d expect to see about three examples of a quadruple. This never seems to happen because it just doesn’t feel random.

So what does randomness actually look like? Well, it’s clumpy.

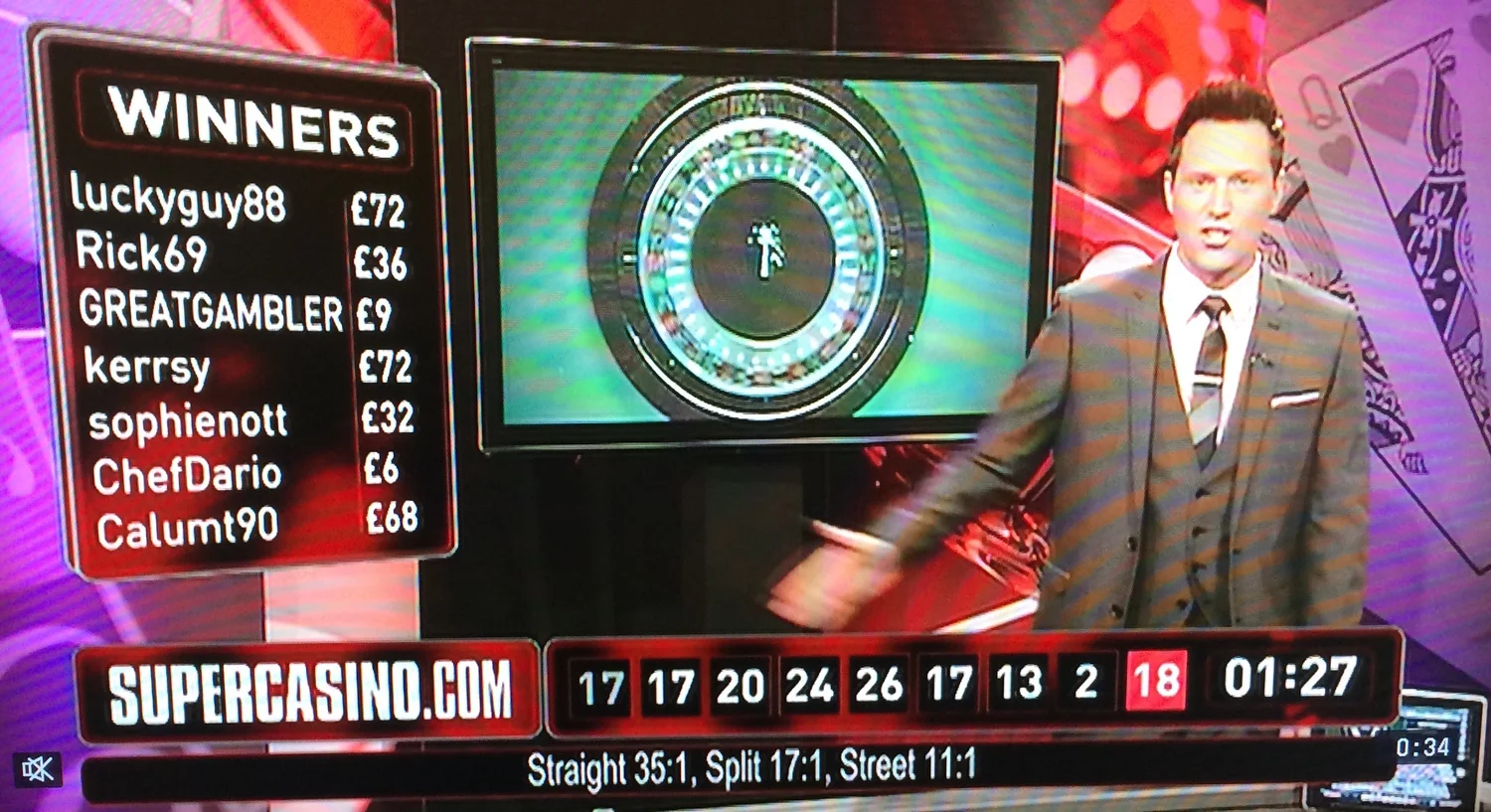

The above screenshot shows an interesting point in a sequence of games of roulette. The results of the previous nine spins are shown at the bottom of the screen in reverse order, so black 17 was the most recent.

Above this we see the bets that have been made on the next spin. Note the huge stack of chips on red (circled) compared with the tiny stack betting on black. The gamblers clearly believe that a red is “overdue”, since the last seven spins have all been black. This is an example of the so-called gambler’s fallacy. Probability theory tells us that a black is equally likely on the next spin. We sometimes say that the roulette wheel has no memory: it doesn’t know what the outcome of the last spin was — it’s not keeping count and making everything even out.

So what did happen next?

Not only was the next spin a black, it was black 17 again. In fact, black 17 had come up three times in the last nine spins.

This is a great example of how clumpy randomness is. People tend to associate randomness with evenness. In the very long run they’re right. In a very large number of spins, you would expect to see black about half the time, and black 17 about one time in 37. But in the short run, you often get clumpy results such as this. (It really does have to be a very long run. If you look at the UK National Lottery statistics, you’ll see that there is considerable variation in the number of times each of the original 49 balls has come up: as I write this, 40 has come up 321 times, but 13 has only been drawn 245 times. I’ll say more about short run vs. long run when I tell the story of John Kerrich’s coin tossing experiment in the next section.)

It’s because clumpiness doesn’t feel random that Apple had to fiddle the shuffle feature in iTunes to make it seem random by avoiding playing two songs by the same artist one after the other.

And here’s my favourite statistical cartoon. Is the troll really generating random numbers? Maybe . . .